
NetKernel News Volume 2 Issue 35

July 1st 2011

What's new this week?

Catch up on last week's news here

Repository Updates

The following updates are available in the NKEE and NKSE repositories...

- system-core 0.28.1

- Added active:userMetaAggregator (see below)

The following *new* tools and demos are available (see below)...

- demo-meta-params 1.1.1

- A micro-billing dynamic user metadata demo.

- meta-todo 1.1.1

- A todo endpoint annotation tool demonstrating metadata aggregation.

The following packages were updated to add support for an optional <meta> parameter declaration on prototype instances.

- lang-beanshell 1.4.1

- lang-groovy 1.9.1

- lang-javascript 1.5.1

- lang-python 1.5.1

- layer1 1.31.1

Parameterised User Metadata

A couple of weeks ago we discussed the relationship between ROC and metadata. In the second part I highlighted some of the patterns for contextually associating metadata resources and, specifically for today's story, discussed parameterised metadata resources.

I also made a bunch of vapourware promises about 'user-extensible metadata parameters' for endpoints in NetKernel. Well, the vapour has condensed...

Amongst the updates we shipped last week were some enhancements to the NKF base classes to support an arbitrary, extensible meta parameter. Here, for example, is an accessor with some user-associated metadata...

<accessor>

<grammar>res:/foo/bar</grammar>

<class>foo.bar.Baz</class>

<meta>

<cleaning>

<care>Dry Clean on Tuesday's</care>

<washcycle>Woollen Delicates</washcycle>

</cleaning>

</meta>

</accessor>

Notice the <meta> parameter. You can put absolutely anything you like in here - as discussed previously, it supports arbitrary user specified metadata.

You can put a <meta> tag on any logical endpoint - which includes regular accessor declarations (as shown above) but also on mapper endpoint declarations.
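To make the "absolutely anything you like" point concrete, here is a minimal sketch in plain Python (a model, not NKF code) that parses an accessor declaration like the one above and flattens whatever it finds under <meta> into path/value pairs. The helper name read_user_meta is hypothetical, and wrapping the declaration in a single <accessor> element is an assumption made so the snippet parses on its own.

```python
import xml.etree.ElementTree as ET

# Sample declaration modelled on the accessor example in the text.
DECLARATION = """
<accessor>
  <grammar>res:/foo/bar</grammar>
  <class>foo.bar.Baz</class>
  <meta>
    <cleaning>
      <care>Dry Clean on Tuesday's</care>
      <washcycle>Woollen Delicates</washcycle>
    </cleaning>
  </meta>
</accessor>
"""


def read_user_meta(declaration_xml):
    """Return {path: text} for every leaf element under <meta> (hypothetical helper)."""
    root = ET.fromstring(declaration_xml)
    meta = root.find("meta")
    if meta is None:
        return {}
    result = {}

    def walk(node, path):
        children = list(node)
        if not children:
            # A leaf: record its text under the slash-separated path.
            result[path] = (node.text or "").strip()
        for child in children:
            walk(child, f"{path}/{child.tag}")

    for child in meta:
        walk(child, child.tag)
    return result


user_meta = read_user_meta(DECLARATION)
print(user_meta)
```

The parser has no schema for the content of <meta>; it simply walks whatever structure the endpoint author chose, which is the whole point of a user-extensible parameter.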

(Incidentally a year or so ago we discussed the distinction between arguments and parameters - if you're not sure it might be worth a read)

Aggregation Tool

This week we've shipped a new active:userMetaAggregator tool in the ext-system module. This provides a superset aggregated resource with the contextually associated view of the metadata and its related endpoint. The documentation for the tool is here...

http://docs.netkernel.org/book/view/book:ext:system:book/doc:ext:system:usermeta

But it's more useful to see it used in some real working examples...

Demonstrations

We didn't make a big fanfare announcement about this capability last week because it really needs some examples to show the possibilities that it opens up. However, this week we've got around to creating a couple of demos showing two metadata applications exploiting orthogonal metadata aggregation patterns. Along the way this has resulted in a, possibly useful, tool for your development project management...

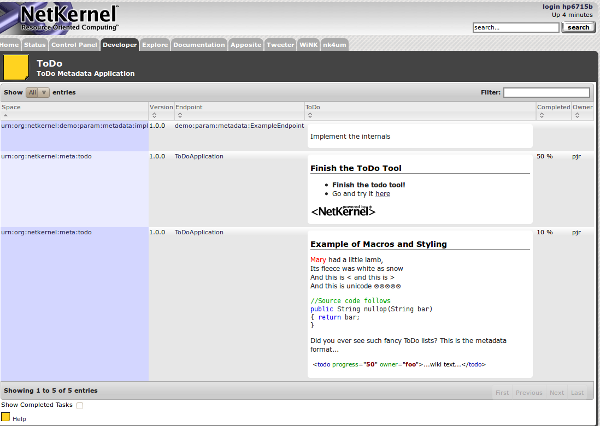

ToDo Metadata Tool

The first demonstration is that old stalwart of IDEs, a ToDo list. Its documentation for adding endpoint todo annotations is here. You can install it from the repositories using apposite; the package is meta-todo (make sure your system has all the recent low-level updates too).

It uses a dynamic import hook to pull itself into the Developer control panel and provides a sortable/filterable view of all the todo items in your system. Each item has its associated space and endpoint listed and cross-linked into the space explorer (breadcrumbs back to the primary resource). The ToDo entry is wiki text and supports the full range of documentation macros...

The ToDo tool obtains the full system-wide user metadata resource aggregation and then selects the subset of the user metadata marked by a <todo> tag.

So to add a ToDo item to an endpoint you'd do this...

<accessor>

<grammar>res:/foo/bar</grammar>

<class>foo.bar.Baz</class>

<meta>

<todo progress="50" owner="pjr">
==Finish the ToDo Tool==
*'''Finish the todo tool!'''
*Go and try it [http://localhost:1060/tools/meta/todo|here]
</todo>

</meta>

</accessor>

This <todo> tag is shown rendered by the tool in the screenshot (above) as the 2nd item in the list.

The added bonus is that the text content of the <todo> tag is treated as wiki text and is parsed. The power of this is not that you can do fancy styled todo messages - but, since NetKernel itself is a resource oriented system, you can include links to other tools (like the space explorer, unit tests, documentation, etc etc). So your todo entries can become contextual foci for group developer communication.

Incidentally, all the regular NK documentation wiki macros are supported - so you can have todos with highlighted source code snippets, xml, images etc etc, (as illustrated in the screenshot above).

After you've installed the todo tool you'll find it in an expanded directory

modules/urn.org.netkernel.meta.todo-x.x.x/

The app uses an outer xrl template containing an xrl:include request for the groovy runtime to generate the ToDo table. Here's the main source code...

//Request the aggregate user metadata resource (ensure we normalize to only show origin)
req=context.createRequest("active:userMetaAggregator")
req.addArgument("originonly", "true")
rep=context.issueRequest(req)
//Transform todo's into a table (the transform styles the todo text
//entry into an embedded xrl:include to parse the wiki, which gets evaluated by
//the ongoing xrl recursion).
req=context.createRequest("active:xsltc")
req.addArgumentByValue("operand", rep)
req.addArgument("operator", "res:/resources/style-todo.xsl")
rep=context.issueRequest(req)
context.createResponseFrom(rep);

Errrr. That's it. It's just a composite ROC application that leverages existing tools. All we really needed to do was think up a suitable structure for the todo metadata.

Pattern

The ToDo tool illustrates a pattern of sub-setting the global aggregate user metadata.
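As a rough illustration of the sub-setting pattern, here's a plain-Python sketch (not the tool's real XSLT) that walks an aggregate document and keeps only the <todo> entries, together with their space and endpoint. The spaces/space/elements/element/meta layout is borrowed from the aggregate output shown later in this article; the space and endpoint identifiers and the select_todos name are made up for the example.

```python
import xml.etree.ElementTree as ET

# Hypothetical aggregate: two endpoints, only one of which carries a <todo>.
AGGREGATE = """
<spaces>
  <space>
    <id>urn:example:space:a</id>
    <elements>
      <element>
        <id>example:EndpointOne</id>
        <meta>
          <todo progress="50" owner="pjr">Finish the ToDo Tool</todo>
          <cleaning><washcycle>Woollen Delicates</washcycle></cleaning>
        </meta>
      </element>
      <element>
        <id>example:EndpointTwo</id>
        <meta><dev><author>PJR</author></dev></meta>
      </element>
    </elements>
  </space>
</spaces>
"""


def select_todos(aggregate_xml):
    """Return one row per <todo>, tagged with its owning space and endpoint."""
    rows = []
    root = ET.fromstring(aggregate_xml)
    for space in root.findall("space"):
        space_id = space.findtext("id")
        for element in space.findall("elements/element"):
            for todo in element.findall("meta/todo"):
                rows.append({
                    "space": space_id,
                    "endpoint": element.findtext("id"),
                    "owner": todo.get("owner"),
                    "progress": int(todo.get("progress", "0")),
                    "text": (todo.text or "").strip(),
                })
    return rows


todos = select_todos(AGGREGATE)
# A sortable view, e.g. least-finished items first.
for row in sorted(todos, key=lambda r: r["progress"]):
    print(row)
```

Everything that isn't a <todo> is simply ignored, so other tools can hang their own structures off the same <meta> parameter without getting in each other's way.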

The ToDo application is really a pretty lame "hello world" just to show the possibilities of using the global aggregate user metadata. In the next example we're going to look at the value within an application to provide non-functional architectural capabilities.

Micro-Billing Software

Here we'll examine a demo in which micro-billing metadata is associated with a set of services and each use of a service is billed to an internal corporate accounting system. The application domain is just for illustration, the patterns discussed could be applied to many many scenarios (eg access control, logging, service management, transport performance tuning, transport service configuration (eg REST JSR-311 style patterns), dynamic routing... and ultimately, dynamically self-composing systems).

Install / Try It

First off go to apposite and install the package demo-meta-params (make sure your system has all the recent low-level updates too). After you've installed you'll find an expanded module in the directory

modules/urn.org.netkernel.demo.param.metadata-x.x.x/

The demo can be run by using its unit tests...

http://localhost:1060/test/exec/html/test:urn:org:netkernel:demo:param:metadata

When you run the tests you'll see the following output in the stdout/logs...

I 09:22:12 TestEngineEn~ Test Complete [Service metadata] [success] [5]
I 09:22:12 TestEngineEn~ Assert Complete [xpath] [/spaces/space/id='urn:org:netkernel:demo:param:metadata:impl'] [success]
I 09:22:12 TestEngineEn~ Assert Complete [xpath] [/spaces/space/elements/element/meta] [success]
I 09:22:12 TestEngineEn~ Assert Complete [xpath] [/spaces/space/elements/element[id='demo:param:metadata:ExampleEndpoint']/meta/dev/projectid='MEGACORP-PID-456123-XSZ'] [success]
I 09:22:12 TestEngineEn~ Assert Complete [xpath] [/spaces/space/elements/element[id='demo:param:metadata:ExampleEndpoint']/meta/commerce/billing-code='PAYG-123456-4567-COST'] [success]
*****************KERCHING********************
Time: Thu Jun 30 09:22:12 BST 2011
Payee Billing Id: TESTREQ-123456-7890
Credit To Project: MEGACORP-PID-456123-XSZ
Billing Code: PAYG-123456-4567-COST
Item: Example Service PAYG
Amount: 0.01
********************************************
I 09:22:12 TestEngineEn~ Test Complete [Test Service 1] [success] [1]
I 09:22:12 TestEngineEn~ Assert Complete [stringEquals] [Hello] [success]
*****************KERCHING********************
Time: Thu Jun 30 09:22:12 BST 2011
Payee Billing Id: TESTREQ-123456-7890
Credit To Project: MEGACORP-PID-456123-XSZ
Billing Code: PAYG-123456-891011-COST
Item: Expensive Service PAYG - 2
Amount: 0.23
********************************************
I 09:22:12 TestEngineEn~ Test Complete [Test Service 2] [success] [2]

You can see that the unit tests are sequentially calling two services in the implementation space. But each time they are called there is a "KERCHING" micro-billing transaction happening.

If you repeat the tests you'll see that Service 1 has a static fixed price, but that Service 2 has a dynamic spot price which fluctuates each time you request it.

We'll see that the micro-billing is implemented using a local aggregated metadata pattern. And, since metadata is just a resource, we can easily use dynamic patterns with dynamic metadata.

Tour of the Module

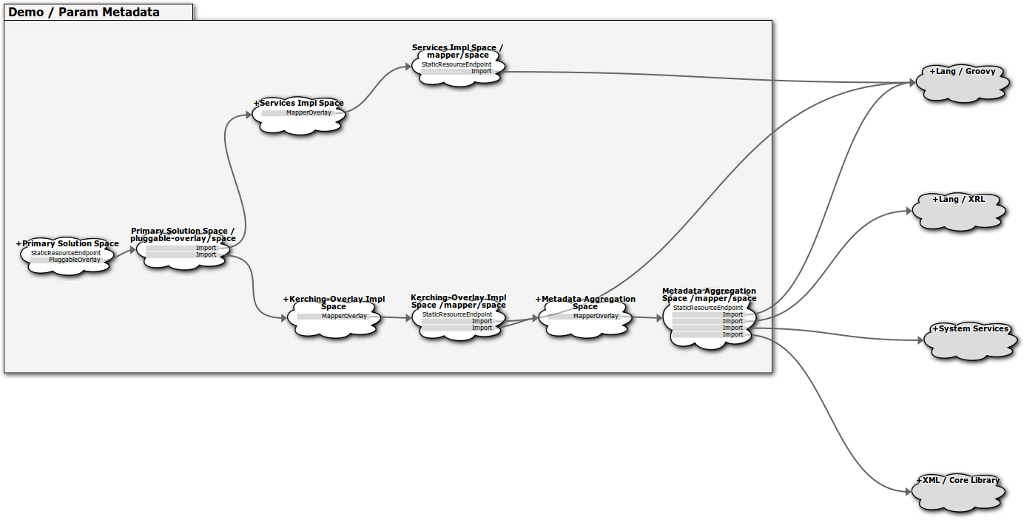

If you look at the module.xml you'll find it contains six rootspaces. The last two are just for unit testing and documentation so we'll ignore those. Here's a diagram of the spacial architecture embodied in the first four rootspaces (there's an SVG version here which may be clearer)...

The "main application" consists of the two "Service 1" and "Service 2" services which are just dummy services implemented in the "Services Impl Space" (the second rootspace in the module.xml and shown as the top two spaces in the diagram).

From the diagram we can see that the "Service Impl" space is imported into the "Primary Solution Space" and wrapped by a pluggable-overlay. This pluggable overlay intercepts all requests to the services and performs the micro-billing. Here's the rootspace declaration...

<fileset>

<regex>res:/etc/system/SimpleDynamicImportHook.xml</regex>

</fileset>

<pluggable-overlay>

<preProcess>

<identifier>active:Kerching</identifier>

<argument name="request">arg:request</argument>

</preProcess>

<space>

<import>

<uri>urn:org:netkernel:demo:param:metadata:impl</uri>

</import>

<import>

<uri>urn:org:netkernel:demo:param:metadata:kerching</uri>

</import>

</space>

</pluggable-overlay>

</rootspace>

You can see that the pluggable-overlay makes a request to active:Kerching for every request that is resolved to the imported services inside the overlay's wrapped space (i.e. every time we request Service1 or Service2 we trigger a request to the Kerching service).
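If the overlay idea is new to you, the following toy model in plain Python may help. It is only a sketch of the interception pattern, not how NetKernel implements pluggable-overlay: the PreProcessOverlay class, the dictionary-shaped request and the "placeholder response" for Service2 are all assumptions for illustration.

```python
class PreProcessOverlay:
    """Toy overlay: run a pre-process hook for every request that resolves inside."""

    def __init__(self, wrapped, pre_process):
        self.wrapped = wrapped          # identifier -> callable service
        self.pre_process = pre_process  # callable(request) -> request to forward

    def issue(self, identifier, headers=None):
        # Requests that don't resolve in the wrapped space never reach the hook.
        if identifier not in self.wrapped:
            raise LookupError(f"{identifier} does not resolve inside the overlay")
        request = {"identifier": identifier, "headers": dict(headers or {})}
        forwarded = self.pre_process(request)
        return self.wrapped[forwarded["identifier"]](forwarded)


seen = []


def kerching(request):
    """Stand-in for active:Kerching: note the call, then pass the request on."""
    seen.append(request["identifier"])
    return request


overlay = PreProcessOverlay(
    {
        "Service1": lambda request: "Hello",
        "Service2": lambda request: "placeholder response",
    },
    kerching,
)

print(overlay.issue("Service1"))
print(seen)
```

The services themselves know nothing about the hook, which is why a non-functional concern such as billing can be layered on without touching their code.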

So let's take a look at the third rootspace called "Kerching Overlay Impl" which is also imported into the Primary Solution Space. This is where active:Kerching is implemented. You can see it's done with Groovy and the code is in res:/resources/endpoints/kerching.gy. The first few lines are...

import org.netkernel.layer0.representation.*
import org.netkernel.layer0.representation.impl.*
//Source the outer request
outer=context.source("arg:request")
//Process the outer requests billingcode header - this would be crypto signed etc
billingcode=outer.getHeaderValue("BILLINGCODE")
if(billingcode==null)
{   throw new Exception("Attempt to invoke Kerchingable Service with no billing code")
}

As with any pluggable-overlay, we receive the outer request (which has been resolved to the inner wrapped space) as an argument - so to begin with we source the "arg:request" and assign this to the variable "outer".

Next we examine the outer request and look for a request header. Any request within or between NK systems can specify arbitrary user-specified request headers. Here we've invented the name "BILLINGCODE", which must have a valid billing code as its value. We'll assume this is sufficient for us to be able to debit the requestor's billing account - obviously if this were not just a simple demo we'd also potentially encrypt this and/or have a signature for the request etc. But for now let's just take it as read that the outer request must have a BILLINGCODE header - if it doesn't we throw an exception and the request is denied.
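The demo stops at "is the header present?". For the curious, here's one way the signature idea might look, sketched in plain Python with the standard hmac module. This is an assumption about how you could do it, not something the demo ships: the BILLINGSIG header name, the shared secret and both function names are invented for the example.

```python
import hashlib
import hmac

# Hypothetical shared secret between requestor and billing overlay.
SECRET = b"demo-shared-secret"


def sign(billing_code, secret=SECRET):
    """Return a hex HMAC-SHA256 signature for a billing code."""
    return hmac.new(secret, billing_code.encode("utf-8"), hashlib.sha256).hexdigest()


def checked_billing_code(headers, secret=SECRET):
    """Return the billing code, or refuse the request if it is absent or unsigned."""
    code = headers.get("BILLINGCODE")
    if code is None:
        raise PermissionError("Attempt to invoke Kerchingable Service with no billing code")
    signature = headers.get("BILLINGSIG")  # hypothetical header name
    # compare_digest avoids leaking how many characters matched.
    if signature is None or not hmac.compare_digest(signature, sign(code, secret)):
        raise PermissionError("Billing code signature missing or invalid")
    return code


good = {"BILLINGCODE": "TESTREQ-123456-7890", "BILLINGSIG": sign("TESTREQ-123456-7890")}
print(checked_billing_code(good))
```

A request that omits the header, or carries a code that doesn't match its signature, is rejected before any billing or forwarding happens.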

OK next we need to know which of the wrapped endpoints the outer request has been resolved to. We do that like this...

//What is the endpoint we have resolved to?

target=outer.getResolvedElementId()

So now we know the identity of the target endpoint (it'll be either Service1 or Service2 in this case).

Next we need to know the commerce metadata for the endpoint so that we can charge the user. So we finally see where the metadata comes into play. The kerching implementation makes a request for the resource "active:LocalMetadataAggregation"...

//Get metadata for the target endpoint
meta=context.source("active:LocalMetadataAggregation", IHDSInversion.class)

Notice it also asks for it in the form of an IHDSInversion. So let's pause our discussion here a moment and switch horses to look at the implementation of this local metadata resource.

Local Metadata Aggregation

If we look at the fourth rootspace we see the "Metadata Aggregation Space" - we can see from the structure diagram above that this space is imported by the "Kerching Overlay Impl" space - so the call in kerching.gy will be resolved to the service in this space.

You can see that active:LocalMetadataAggregation is also implemented with Groovy and is the script res:/resources/meta/aggregator.gy which looks like this...

//Source the user metadata in the impl space
req=context.createRequest("active:userMetaAggregator")
req.addArgument("space", "urn:org:netkernel:demo:param:metadata:impl")
rep=context.issueRequest(req)
//Evaluate any xrl:includes embedded in the metadata
req=context.createRequest("active:xrl2")
req.addArgumentByValue("template", rep)
rep=context.issueRequest(req)
context.createResponseFrom(rep);

OK this looks familiar, it's pretty similar to the ToDo implementation from earlier. Firstly it requests active:userMetaAggregator, but notice that this time it provides the space argument and indicates that we're only interested in the user metadata in the space urn:org:netkernel:demo:param:metadata:impl (which will be the user metadata for Service 1 and Service 2).

Secondly, notice that we now evaluate the metadata resource in the XRL runtime. What's all that about?

OK, it's time to get down to details and look at the format of the metadata that we've invented for this application architecture. Take another look at the second rootspace "Services Impl" and in particular at the endpoint declarations for our two dummy services in the mapper config. Here's the <meta> tag of the first "Service 1" endpoint...

<meta>

<dev>

<author>PJR</author>

<project>Demo Project</project>

<projectid>MEGACORP-PID-456123-XSZ</projectid>

</dev>

<commerce>

<billing-code>PAYG-123456-4567-COST</billing-code>

<billing-line-item>Example Service PAYG</billing-line-item>

<price>0.01</price>

</commerce>

</meta>

So we've invented a <dev> structure with some project developer fields, especially a projectid - which presumably is meaningful in our billing system. We've also added a <commerce> structure with a billing code, line item and a price field. So this is the metadata that the kerching overlay is going to use to bill for the use of this service. (Bear in mind this is a simple demo for illustration of the pattern - don't worry about whether this billing metadata is really a typical example).

Next take a look at the "Service 2" endpoint. Its meta tag looks like this...

<meta>

<xrl:include xmlns:xrl="http://netkernel.org/xrl">

<xrl:xpath>../../meta</xrl:xpath>

<xrl:identifier>res:/resources/meta/external-ref-metadata.xml</xrl:identifier>

</xrl:include>

</meta>

We've embedded an xrl:include request in the metadata. Ah ha! So that's why active:LocalMetadataAggregation is requesting xrl - it's dereferencing the linked metadata resources. So what do we see if we look at Service2's xrl:include? It is requesting

res:/resources/meta/external-ref-metadata.xml

which looks like this...

<dev>

<author>PJR</author>

<project>Demo Project</project>

<projectid>MEGACORP-PID-456123-XSZ</projectid>

</dev>

<commerce>

<billing-code>PAYG-123456-891011-COST</billing-code>

<billing-line-item>Expensive Service PAYG - 2</billing-line-item>

<xrl:include>

<xrl:identifier>active:groovy</xrl:identifier>

<xrl:argument name="operator">res:/resources/meta/dynamic-price.gy</xrl:argument>

</xrl:include>

</commerce>

<scheduling-priority>200%</scheduling-priority>

<completeness>50%</completeness>

<delivery>

<target>2011-08-11</target>

<expected>2011-08-11</expected>

</delivery>

<exceptionNotificationList>

<email>pjr@1060.org</email>

</exceptionNotificationList>

</meta>

Hmmm this looks similar to Service 1. Although, for illustration, we've added some additional "fantasy metadata" for imaginary metadata driven architecture tools - a scheduling priority request level "nice" tool, a project delivery target date, and an exception handler overlay that emails the developer if ever this service is in production and throws an uncaught exception. (I told you parameterised user metadata opens up a world of potential)...

But back to reality, look at the <dev> and <commerce> tags which our kerching layer needs. Notice that this is very similar to the static example on Service 1, just different values for the billing code etc.

But notice that slipped into the commerce tag is another xrl:include. This time it's for the resource that is the dynamic spot-price for the service (I know, it may look like a groovy script request, and that's how it's implemented, but you're not thinking ROC, it's actually an identifier for the resource that's the spot-price of this service).

So now you see how the price of service 2 is made to dynamically vary (we won't discuss the dynamic price implementation, it's just a dumb script).
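To see why nested includes give you dynamic metadata for free, here's a simplified model in plain Python. It is not the XRL runtime: it ignores the xrl:xpath targeting and xrl:argument elements entirely and just swaps each xrl:include for whatever a resolver function returns, recursing so that includes inside included resources are evaluated too. The demo:spot-price identifier and the price range are invented stand-ins for the demo's dynamic-price script.

```python
import random
import xml.etree.ElementTree as ET

XRL = "http://netkernel.org/xrl"
INCLUDE = f"{{{XRL}}}include"
IDENTIFIER = f"{{{XRL}}}identifier"


def resolve_includes(node, resolver):
    """Replace every xrl:include below node with resolver(identifier), recursively."""
    for index, child in enumerate(list(node)):
        if child.tag == INCLUDE:
            replacement = resolver(child.findtext(IDENTIFIER))
            # The included resource may itself contain further includes.
            resolve_includes(replacement, resolver)
            node.remove(child)
            node.insert(index, replacement)
        else:
            resolve_includes(child, resolver)
    return node


def resolver(identifier):
    """Hypothetical address space with one static and one dynamic resource."""
    if identifier == "res:/resources/meta/external-ref-metadata.xml":
        return ET.fromstring(
            f'<commerce xmlns:xrl="{XRL}">'
            "<billing-code>PAYG-123456-891011-COST</billing-code>"
            "<xrl:include><xrl:identifier>demo:spot-price</xrl:identifier></xrl:include>"
            "</commerce>"
        )
    if identifier == "demo:spot-price":  # invented identifier for the spot price
        price = ET.Element("price")
        price.text = f"{random.uniform(0.01, 0.30):.2f}"
        return price
    raise LookupError(identifier)


meta = ET.fromstring(
    f'<meta xmlns:xrl="{XRL}"><xrl:include><xrl:identifier>'
    "res:/resources/meta/external-ref-metadata.xml"
    "</xrl:identifier></xrl:include></meta>"
)
resolved = resolve_includes(meta, resolver)
print(ET.tostring(resolved, encoding="unicode"))
```

Run it a few times and the <price> changes while the rest of the metadata stays put, which mirrors what you see when you repeat the Service 2 test.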

You can examine active:LocalMetadataAggregation by running the first unit test here (request it a few times to see the price vary)...

http://localhost:1060/test/exec/html/test:urn:org:netkernel:demo:param:metadata?index=1

It'll look something like this...

<spaces>

<space>

<id>urn:org:netkernel:demo:param:metadata:impl</id>

<hash>7F/N</hash>

<version>1.0.0</version>

<elements>

<element>

<id>demo:param:metadata:ExampleEndpoint</id>

<hash>7F/N/demo:param:metadata:ExampleEndpoint</hash>

<meta>

<dev>

<author>PJR</author>

<project>Demo Project</project>

<projectid>MEGACORP-PID-456123-XSZ</projectid>

</dev>

<commerce>

<billing-code>PAYG-123456-4567-COST</billing-code>

<billing-line-item>Example Service PAYG</billing-line-item>

<price>0.01</price>

</commerce>

</meta>

</element>

<element>

<id>demo:param:metadata:ExampleEndpoint2</id>

<hash>7F/N/demo:param:metadata:ExampleEndpoint2</hash>

<meta>

<dev>

<author>PJR</author>

<project>Demo Project</project>

<projectid>MEGACORP-PID-456123-XSZ</projectid>

</dev>

<commerce>

<billing-code>PAYG-123456-891011-COST</billing-code>

<billing-line-item>Expensive Service PAYG - 2</billing-line-item>

<price>0.07</price>

</commerce>

<scheduling-priority>200%</scheduling-priority>

<completeness>50%</completeness>

<delivery>

<target>2011-08-11</target>

<expected>2011-08-11</expected>

</delivery>

<exceptionNotificationList>

<email>pjr@1060.org</email>

</exceptionNotificationList>

</meta>

</element>

</elements>

</space>

</spaces>

OK our story is nearly done. Now we understand how the metadata is being aggregated we can return to the kerching overlay implementation...

Kerching Reloaded

Recall that the overlay requests the metadata like this ...

//Get metadata for the target endpoint
meta=context.source("active:LocalMetadataAggregation", IHDSInversion.class)

...and, since this metadata is a resource like any other, it will probably come immediately from cache.

Remember that it asks for it as an IHDSInversion. But nobody told this to the metadata aggregator!

This is where transreption comes in. There's a layer1 transreptor which will transrept almost anything that can be turned into HDS into an IHDSInversion. Our aggregate user metadata is tree structured XML, which easily goes to HDS and then to IHDSInversion.

What is IHDSInversion? Well it's just an inverted view of an HDS structure with a value-based map allowing us to find a node based upon its value. It's what books have at the back of them, you might be more familiar with its usual name of "index". (Build the javadoc for layer0 to see the class org.netkernel.layer0.representation.IHDSInversion).

So we can look in the index of the HDS structure to find a node that has a value that matches our target endpoint (remember that? If not go back to the top and see how we know which service has been resolved).

So we know the target, we know that the user metadata must contain an entry for that targeted endpoint. We look it up in constant time in the index, and we know that there can only be one since that's a guarantee of the metadata aggregation model we concocted. So we can ask for the first, and only, entry in the index list and, for good measure, find its parent, which is the general <element> entry (see the live metadata again).

That's what we're doing here...

nl=meta.getNodes(target)
n=nl.get(0).getParent()
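If you'd like to see the idea of an inverted index without building the layer0 javadoc, here's a small plain-Python model. It is not the IHDSInversion implementation, just a sketch of the same lookup: a tree of nodes with parent links, plus a dictionary from value to the nodes holding that value. The Node class and build_value_index are names made up for this example.

```python
class Node:
    """Minimal tree node with a name, an optional value and a parent link."""

    def __init__(self, name, value=None, children=()):
        self.name = name
        self.value = value
        self.parent = None
        self.children = list(children)
        for child in self.children:
            child.parent = self

    def first(self, name):
        """Return the first child with the given name, or None."""
        return next((c for c in self.children if c.name == name), None)


def build_value_index(root):
    """Map each value to the list of nodes carrying it (the 'inversion')."""
    index = {}
    stack = [root]
    while stack:
        node = stack.pop()
        if node.value is not None:
            index.setdefault(node.value, []).append(node)
        stack.extend(node.children)
    return index


def endpoint(endpoint_id, price):
    """Build one <element> entry in the shape of the aggregate metadata."""
    return Node("element", children=[
        Node("id", endpoint_id),
        Node("meta", children=[Node("commerce", children=[Node("price", price)])]),
    ])


tree = Node("spaces", children=[Node("space", children=[
    Node("id", "urn:org:netkernel:demo:param:metadata:impl"),
    Node("elements", children=[
        endpoint("demo:param:metadata:ExampleEndpoint", "0.01"),
        endpoint("demo:param:metadata:ExampleEndpoint2", "0.07"),
    ]),
])])

index = build_value_index(tree)
target = "demo:param:metadata:ExampleEndpoint2"
matches = index[target]        # dictionary lookup, no tree walk
element = matches[0].parent    # step up from <id> to its <element>
print(element.first("meta").first("commerce").first("price").value)
```

Building the index costs one pass over the tree; after that every lookup by endpoint id is a single dictionary hit, which is what makes it cheap to do on every billed request.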

Now we're not interested in the general stuff about this endpoint. So let's just get its "meta" node. If there is no <meta> then we throw an exception - someone's trying to call a service which can't be billed because someone's forgotten to give it any billing metadata...

m=n.getFirstNode("meta")
if(m==null)
{   throw new Exception("No metadata specified for target endpoint $target")
}

Finally all the pieces are complete: we know the target, we have its metadata, we can construct a billing transaction and fire that off to a billing service...

t=new Date();
//Create Kerching message and make billing transaction
message="""*****************KERCHING********************
Time: $t
Payee Billing Id: $billingcode
Credit To Project: ${m.getFirstValue("dev/projectid")}
Billing Code: ${m.getFirstValue("commerce/billing-code")}
Item: ${m.getFirstValue("commerce/billing-line-item")}
Amount: ${m.getFirstValue("commerce/price")}
********************************************
"""
println(message)
//Here we'd construct a validated billing resource and issue a request to a billing service (probably with a backend DB).
//Here's a dummy...
/*
billreq=context.createRequest("active:PAYGBilling")
billreq.addArgumentByValue("transaction", sometransactionresource)
context.issueRequestAsync(billreq) //Lets do it async so that the requestor is not delayed by the billing. Can always add a request listener to handle exceptions and deal with transaction failure out-of-band.
*/
//Clone the outer request and send the requestor on to the service.
inner=outer.getIssuableClone()
context.createResponseFrom(inner)

Finally we clone the outer request, so that the pluggable-overlay will then issue a request to the target service (the end-user requestor never knew we were here - well at least not until they get their usage bill at the end of the month!).
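The "bill asynchronously, then forward" step can be sketched outside NetKernel too. The following plain-Python model is an illustration under assumptions, not the demo's code: it uses a thread pool in place of an async request, a list called LEDGER in place of a billing service, and a flat dictionary in place of the <meta> node. All of those names are invented here.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime

LEDGER = []     # stand-in for a billing service with a backend DB
FAILURES = []   # out-of-band record of failed transactions
EXECUTOR = ThreadPoolExecutor(max_workers=1)


def kerching_message(billing_code, meta, when):
    """Format the billing transaction text from the endpoint's metadata."""
    return "\n".join([
        "*****************KERCHING********************",
        f"Time: {when.isoformat()}",
        f"Payee Billing Id: {billing_code}",
        f"Credit To Project: {meta['dev/projectid']}",
        f"Billing Code: {meta['commerce/billing-code']}",
        f"Item: {meta['commerce/billing-line-item']}",
        f"Amount: {meta['commerce/price']}",
        "********************************************",
    ])


def post_transaction(message):
    LEDGER.append(message)


def note_failure(future):
    """Listener: record a billing failure without involving the requestor."""
    if future.exception() is not None:
        FAILURES.append(future.exception())


def bill_then_forward(request, meta, service, when=None):
    """Queue the billing transaction, then pass the request to the service."""
    message = kerching_message(
        request["headers"]["BILLINGCODE"], meta, when or datetime.now()
    )
    future = EXECUTOR.submit(post_transaction, message)
    future.add_done_callback(note_failure)
    return service(request), future


meta = {
    "dev/projectid": "MEGACORP-PID-456123-XSZ",
    "commerce/billing-code": "PAYG-123456-4567-COST",
    "commerce/billing-line-item": "Example Service PAYG",
    "commerce/price": "0.01",
}
request = {"identifier": "Service1", "headers": {"BILLINGCODE": "TESTREQ-123456-7890"}}
response, pending = bill_then_forward(
    request, meta, lambda r: "Hello", datetime(2011, 6, 30, 9, 22, 12)
)
pending.result(timeout=5)  # only so the example prints deterministically
print(response)
print(LEDGER[0])
```

In real use you wouldn't wait on the pending transaction at all; the listener deals with failures out-of-band and the requestor gets their response without delay.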

ROC Patterns

This application is showing a pattern of localized aggregation of contextually associated metadata resources. It's also showing how metadata really is a resource, just like anything else, and how it can be dynamically computed in the contextual ROC address space.

There's really no limit here. ROC metadata aggregation is scale invariant and can be done system-wide or even, with NKP, cloud-wide.

As we've seen in this last example it can be focused down to fine granularity to implement interesting internal architectural patterns inside a local application space. Or, with aggregates of spacial aggregates (metadata about metadata), it can occupy the medium scale to apply patterns across selected parts of a system architecture.

We might be a little biased. But compare this to Annotations and remember this is not constrained to a specific language. I rest my case.

But there's still another untapped and, as yet, unillustrated dimension. We can use aggregate metadata to decide which services we want to expose (dynamically import) into an aggregation space. So we can use aggregate user metadata to make routing decisions (one of Brian's classic examples is "Give me a print service").
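We haven't built a demo of this one, so treat the following as a thought experiment in plain Python rather than anything that ships. It imagines that each endpoint's user metadata declares a capability and a cost, and that a router picks a service by asking for a capability instead of an identifier. The catalogue, the field names and give_me are all hypothetical.

```python
# Hypothetical aggregate: endpoint identifiers with flattened user metadata.
CATALOGUE = [
    {"endpoint": "example:LaserPrinter", "meta": {"capability": "print", "cost": 0.02}},
    {"endpoint": "example:PlotterPrinter", "meta": {"capability": "print", "cost": 0.15}},
    {"endpoint": "example:Scanner", "meta": {"capability": "scan", "cost": 0.01}},
]


def give_me(catalogue, capability):
    """Return the cheapest endpoint whose metadata advertises the capability."""
    candidates = [
        entry for entry in catalogue
        if entry["meta"].get("capability") == capability
    ]
    if not candidates:
        raise LookupError(f"No service offers capability '{capability}'")
    return min(candidates, key=lambda entry: entry["meta"]["cost"])["endpoint"]


print(give_me(CATALOGUE, "print"))
```

The requestor never names a printer; the routing decision falls out of the metadata, so adding or removing a print service changes the answer without changing the caller.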

But it goes much much further.

ROC allows us to treat a software system itself as a resource. So I think you can see that we have all the pieces in place to have organic dynamic self-assembling architecture - which will combine and recombine based upon the needs and state of the system. All it needs is some meta-architecture and a few contextually associated resources (aka metadata). Now that's powerful, isn't it?

Los Alamos

I hope all my friends at Los Alamos National Lab are safe and not caught up in the fires. A couple of years back I had the great thrill (being a Physics nerd) of visiting Los Alamos and the honour of being there to consult and teach them NetKernel.

Bootcamp, Belgium and its Innate Affinity for ROC

We're steadily getting a sense that it would be a "good thing" to have the NetKernel Bootcamp in Belgium in October. This is rapidly moving from tentative, to a firm plan - still one or two things to work out.

In some feedback on a recent newsletter item, Tom Geudens made a beautiful observation...

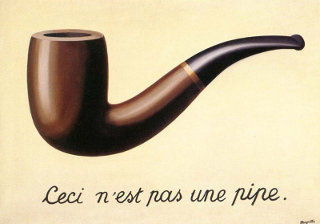

There's a major René Magritte exhibition that has just opened in the UK. One of his famous paintings is "Ceci n'est pas une pipe" (shown left). If your French is not great, this translates as "This is not a pipe".

Tom pointed out that, indeed, no this is not a pipe, it is a representation of a pipe!

Magritte (and Tom) is sharing a joke with the viewer. And the joke is that he recognises that we all have an instinctive sense of the axioms of ROC. The pipe is an abstract resource, the painting is a representation, the viewer is providing the context and the written phrase is the resource identifier which the viewer resolves.

So what better place to hold a bootcamp in ROC? It seems that Belgium has an innate cultural affinity for ROC.

Ceci n'est pas une publicité pour un bootcamp (This is not an advertisement for a bootcamp)

Please drop me a note if this interests you so we can get more detail into plans.

Have a great weekend,

Comments

Please feel free to comment on the NetKernel Forum

Follow on Twitter:

@pjr1060 for day-to-day NK/ROC updates

@netkernel for announcements

@tab1060 for the hard-core stuff

To subscribe for news and alerts

Join the NetKernel Portal to get news, announcements and extra features.

NetKernel will ROC your world
