
NetKernel News Volume 2 Issue 5

November 19th 2010

What's new this week?

- Repository Updates

- Space Explorer - Logical vs Physical Endpoints

- Corporate NTLM HTTP Proxy

- Tom's Practical NetKernel Book

- ROC and Languages - Part 2: Imports and Extrinsic Functions

Catch up on last week's news here

Repository Updates

The following packages are available in both NKEE and NKSE repositories...

- http-client 2.1.1

  - NTLM proxy support (see below)

- layer0 1.48.1

  - request builder serialization support (used by mapper config in space explorer)

  - improved javadoc for custom logging in INKFLocale

  - fix to support multiple loggers within one module

- module-standard 1.35.1

  - improved error handling for badly implemented preProcess on pluggable overlay

  - expose Mapper config in state for use by space explorer

- nkse-dev-tools 1.26.1

  - Space Explorer: overlay details now has link to wrapped space

  - Space Explorer: mapper implementations show current config parameter in state

- nkse-doc-content 1.26.1

  - (slight) improvement to logging documentation

- system-core 0.20.1

  - updated MetaToXML transreptor to serialize HDS state as XML

The following package is available in the NKEE repository...

- nkee-dev-tools 0.16.1

  - system healthcheck space integrity now points to new space explorer

Space Explorer - Logical vs Physical Endpoints

We've been receiving positive feedback from people using the new space explorer. I wanted to discuss one aspect of the explorer which is implicit to the tool but which I think needs highlighting.

Did you notice that when looking at a space in the explorer there are dark coloured rows and, usually, several light rows beneath each? Here's an example from the Backend Fulcrum...

The HTTPTransport is the first row (dark), followed by the HTTPBridgeOverlay (dark), followed by the HTTPBridge (light) and so on.

Distinguishing Logical and Physical

A dark row indicates a physical endpoint - i.e. a physical instantiation of an endpoint at that point in the address space. If you click on a physical endpoint you will see the operational state that it may be exposing in its metadata.

A light row indicates a logical endpoint - i.e. a resolvable logical resource set. If you click on a logical endpoint you'll be given the endpoint metadata, such as its grammar, plus any documentation associated with it.

We're still refining the presentation models for some of these views but at the moment you could consider this as "two views in one".

If you concentrate only on the dark rows, you are seeing the ordered physical endpoints as declared in the module.xml. These are what you can also see in the static structure diagrams.

If you focus on the light rows, you are seeing the dynamically resolvable logical resource sets. This is a view of the sets of resources that can be resolved in this space.

By default (i.e. when you've not applied your own sorting in the table) the row order is significant since it is the resolution order. Any request entering this space will be resolved by the logical endpoints (light rows) in the order you see them.

A logical endpoint must always originate from a physical endpoint and you can see this in the table. A logical endpoint originates from the first physical endpoint row above it in the list.

A physical endpoint can be the provider of many logical endpoints. You can see this very clearly with the import endpoint in the figure above. It is importing layer1 which, in ROC terms, means it is "projecting" all of the logical endpoints of layer1 into this address space. Here we can see it's providing the data scheme etc.
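The relationship between dark and light rows can be sketched as a toy data model. This is only an illustration of the idea, not how Space Explorer or the kernel is implemented: the `space` array, the `logicalView` and `resolve` helpers and the prefix-matching functions are all assumptions made up for the sketch.

```javascript
// Toy model only: names and matchers are illustrative, not NetKernel internals.
// Each physical endpoint (dark row) provides zero or more logical endpoints (light rows).
const space = [
  { physical: "HTTPTransport", logical: [] },
  {
    physical: "HTTPBridgeOverlay",
    logical: [{ name: "HTTPBridge", matches: id => id.startsWith("http://") }],
  },
  {
    physical: "import layer1",
    logical: [{ name: "data scheme", matches: id => id.startsWith("data:") }],
  },
];

// Flatten into the default table order. Each logical endpoint remembers the
// physical endpoint it originates from (the first dark row above it).
function logicalView(rows) {
  return rows.flatMap(p => p.logical.map(l => ({ ...l, origin: p.physical })));
}

// Resolution walks the logical endpoints in order; the first match wins.
function resolve(rows, identifier) {
  return logicalView(rows).find(l => l.matches(identifier)) || null;
}

const hit = resolve(space, "http://localhost/tools/");
console.log(hit.name, "originates from", hit.origin);
```

Note that the physical HTTPTransport contributes no logical endpoints in this model, which is why it never appears in the resolution walk.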

Going back to the top of the table we can see that the HTTPBridge is the first logical endpoint in the address space. If you click on it you'll see it has this grammar...

<grammar>

<choice>

<group>

<regex>http://[^/]*/([^\?]*)(\?.*)?</regex>

</group>

</choice>

</grammar>

So it resolves all requests for resources beginning with an http identifier which, by amazing coincidence, is what the physical HTTPTransport issues as its resource requests when it receives an external HTTP request. So HTTPTransport requests only make a very short journey in the ROC address space before they find themselves being evaluated by the HTTPBridge. The HTTPBridge then issues a request into its internal "overlay space", along with injecting a new transient state space into the request scope, but that's another story.
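To see what that regex accepts, here is a minimal sketch that applies it to a couple of identifiers. The pattern is copied from the grammar above; the `^...$` anchoring, the `matchHttp` helper and the sample identifiers are assumptions for the purpose of the example.

```javascript
// The pattern is the one in the HTTPBridge grammar; anchoring it with ^...$
// is an assumption made so that the whole identifier must match.
const httpGrammar = /^http:\/\/[^\/]*\/([^\?]*)(\?.*)?$/;

function matchHttp(identifier) {
  const m = httpGrammar.exec(identifier);
  if (!m) return null;
  // Group 1 is the path after the host, group 2 the optional query string.
  return { path: m[1], query: m[2] || "" };
}

console.log(matchHttp("http://localhost:1060/tools/explorer?space=42"));
console.log(matchHttp("res:/etc/config.xml"));
```

Anything that is not an http identifier simply falls through to the next logical endpoint in the resolution order.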

Corporate NTLM HTTP Proxy

The update to the http-client package in the Apposite repositories includes new NTLM proxy authentication support. NTLM is a Microsoft proprietary authentication mechanism used by Windows ISA proxy server - which, since it talks to Active Directory domain controllers, is prevalent in Windows corporate infrastructures.

Unfortunately it is an undocumented proprietary protocol and as such it requires dark magic to get Java-based systems through it. Many projects like Maven give up and suggest using a proxy-bridge workaround (discussed below).

It's a long story, but it turned out that when we recently migrated from the Apache Http Client version 3 to version 4, the previously built-in support for NTLM had been deprecated.

We've implemented an NTLMProxyEngine for the Apache 4 client based on the JCIFS library from the Samba project. In testing against a Windows-based NTLM proxy we now seem to be back in business, and this is now available in the http-client update.

Since proxy routing is fairly important to be able to get updates from the Apposite repositories, we've slipstreamed the updated http-client into the NKEE download. Therefore, if you're one of those unlucky ones trapped behind an NTLM proxy and so unable to get the http-client update from the repos(!) then just grab a new copy of NKEE here.

Proxy Bridge Workaround

Since NTLM is a closed world, we're not able to offer any long-term assurance; we're not even able to be sure that our testing is comprehensive. This is not a happy situation so we offer the following alternative approach should you want to keep it up your sleeve.

Many Java tools don't attempt NTLM proxy routing; they simply recommend installing a secondary standards-compliant proxy which acts as a bridge to the corporate NTLM proxy.

The CNTLM proxy bridge looks like a good choice and works on both Windows and Linux...

It's pretty straightforward to get set up and you can run it as an app on your own machine or, if you're able to persuade your IT team, as a standalone daemon server for your development team to share.

With this in place all that's then needed is to change your NetKernel proxy settings to point to the CNTLM proxy, which will then transparently route you through the NTLM corporate proxy. One thing is important though: make sure you don't leave any entries in the "Workstation" or "Domain" fields, since it's setting values for these that triggers our http-client to try NTLM mode.

If you have any trouble you know who to call.

Internal Apposite Mirror

If you work in an organization that won't even allow its developers permission to let systems like NK download updates through a proxy, then fear not, there is still an answer. The Apposite repositories can be mirrored locally inside your firewall; this guide explains how to rsync the NKSE repositories.

Apposite repositories are fully signed; packages are individually signed and the overall repository metadata is signed. The NetKernel apposite client authenticates both the overall repository's integrity and each and every package you download for install/update. It won't allow anything that's been tampered with so you can be sure that a local mirror is still a good source of genuine 1060 Research originated packages.

Please talk to us if you want to mirror the NKEE repository since we'll need to provide you with access etc.

Tom's Practical NetKernel Book

Tom Geudens has an updated 0.5 draft of his Practical NetKernel book. This features a new chapter showing how to integrate a 3rd party library API and expose it as a set of ROC services. He's chosen a worked example using MongoDB.

Tom is looking for feedback on progress so far, and also ideas for content. He can be contacted at tom (dot) geudens "at" hush {dot} ai

Thanks Tom!

ROC and Languages - Part 2: Imports and Extrinsic Functions

In the last gripping episode we saw how the transfer of code as state to a language runtime (code state transfer) introduces flexibility and liberates a system architect to step outside of language and choose the dialect of code that most readily solves the problem.

In this instalment I want to show how the language runtime pattern changes the way we can think about imports.

Import

I'm willing to bet that every language you've ever coded in has an import statement of one kind or another.

So when you declare an import within a language what are you doing? You're indicating to the compiler, or interpreter, that within its accessible resources it should load the specified code and make the functions and/or objects referenceable to the code that follows the import.

Imports: A brief survey

Different languages have different models for what we mean by accessible resources. For example many Unix heritage languages, like Python and Ruby, have the concept of a library PATH: a set of directories within which the runtime attempts to resolve an import reference and match it to a corresponding piece of code.

Java, coming out of Sun and having something of a Unix heritage, has a similar PATH model but it is a slightly more general implementation. By default the file system is abstracted into the CLASSPATH which comprises a set of directories or jar files from which compiled java classes may be resolved. But Java goes beyond this and allows you to introduce your own ClassLoaders such that imports can be resolved by arbitrary physical mechanisms. (NetKernel implements its own classloaders to provide a uniform and consistent relationship between the ROC address space and physical modular class libraries).

Languages like XSLT are even more general: all imports are referenced by URI and are delegated to a URIResolver. The language doesn't actually specify how or what this should do, although given its W3C heritage the inference is that it should issue "GET" requests (or their equivalent for protocols other than http:, such as file: URIs).

Javascript, being a language originating within the context of the Web, actually has two models. It is assumed that the execution context is extrinsic (the browser) and that this extrinsic context will provide a global namespace of functions aggregated from all the script references in the HTML page. Javascript is security conscious and has no native internal import, but it does offer an indirect equivalent: you can eval() a script resource requested via AJAX.

Irrespective of which of these you're familiar with, one more observation: the imported code resource is always in the same language.

Language Runtime Imports

Now every language runtime that comes with NetKernel has the ability to import. Usually we've been able to abstract the language's internal mechanism away from the innate hard-coded assumptions of that language and ROCify it.

For example both active:python and active:ruby support a PATH model, but the path is a set of logical ROC addresses decoupled from a physical filesystem. Both languages have companion language libraries provided as an address space in a module. With their resource loading abstracted to use the ROC domain they have absolutely no idea that NetKernel's not Unix (sounds dangerously like GNU eh?).
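As a rough sketch of what a PATH of logical addresses means, the following models import resolution against a map of identifiers rather than a filesystem. It is not the active:python or active:ruby implementation; `rocSpace`, `importPath`, `resolveImport` and the `res:/...` identifiers are hypothetical names chosen for the illustration.

```javascript
// Hypothetical model: `rocSpace` stands in for a ROC address space and is
// just a Map from logical identifier to source text.
const rocSpace = new Map([
  ["res:/lib/python/util.py", "def helper(): pass"],
  ["res:/app/scripts/main.py", "import util"],
]);

// The "path" is a list of logical address prefixes, not filesystem directories.
const importPath = ["res:/app/scripts/", "res:/lib/python/"];

// Try each prefix in order and return the first identifier that resolves.
function resolveImport(moduleName, path, addressSpace) {
  for (const prefix of path) {
    const identifier = prefix + moduleName + ".py";
    if (addressSpace.has(identifier)) {
      return { identifier, source: addressSpace.get(identifier) };
    }
  }
  throw new Error("import not resolvable: " + moduleName);
}

console.log(resolveImport("util", importPath, rocSpace).identifier);
```

Swapping the Map for a different address space changes where the library comes from without touching the importing code, which is the decoupling the paragraph above describes.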

The active:xslt runtime has a URIResolver, it's just that its resolver is NetKernel, so xslt code has unlimited scope to work with resources in the ROC domain.

NetKernel's server-side active:javascript runtime supports imports of the Java class path. There's also a special global object called contextloader which is like the XSLT URI resolver and will import scripts as ROC resources. For good measure you can also use the context object to source and eval() scripts.

There's an immediate benefit to all of NetKernel's language runtimes in having their imports come through the ROC domain. They all acquire the innate dynamic reconfigurability enabled by NetKernel's modularity. Also, wherever possible, they are implemented with compiling transreptors so that the imported code is JIT compiled.

So being a language in NetKernel's ROC address space is a friendly and flexible place to be. But there's more...

Why are you worried about imports? Is it maybe because you're still thinking inside the language?

Import or Extrinsic Functions?

Remember our example from last week? We showed how the language runtime pattern allows an Endpoint to execute arbitrary code in an arbitrary language and how, with NK's ability to accumulate transient representational state, the net cost of the pattern is negligible compared with a local function implementation.

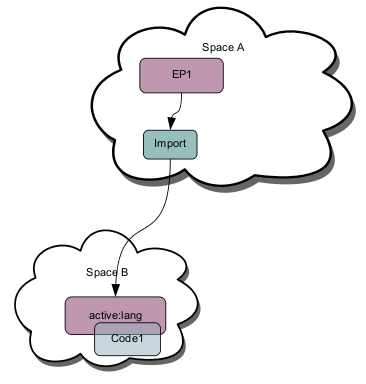

We closed last time with the following diagram and characterized this dynamic state as "code state transfer" to the runtime endpoint...

Now what if Code1 itself embodies another request with a reference for another resource? (As we've seen this could be implicit via an import statement - but let's not dwell on that case). It doesn't matter what the details of the actual request are, for example it might be...

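// state held locally inside Code1

var localState = "CUST:12345678";

// build and issue a request for the extrinsic resource

var req = context.createRequest("active:balance");

req.addArgument("customer", localState);

var balance = context.issueRequest(req);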

Which is NKF pseudo code to issue a request for the resource

active:balance+customer@CUST:12345678

For our discussion, it doesn't matter, we can consider this as the invocation of an extrinsic function f(x) where x is some internal state within the execution of Code1.

Does the language know anything about f(x)? No, this function is outside of the current language - all Code1 knows is f(x)'s identity (we'll see later that it doesn't even need that).

So does SpaceB (the home of the language runtime and the immediate scope for the execution of Code1) import f(x)? No, SpaceB doesn't know anything about f(x) either.

So does SpaceA (the home of Endpoint1 and the endpoint that originates Code1) implement f(x)? Not necessarily!

What if f(x) is implemented in SpaceC and SpaceA has an import endpoint to SpaceC? In this configuration here's what happens when Code1 initiates a request for f(x)...

So you can see that the concept of an "import" in ROC is extrinsic and is defined by the architectural arrangement of spaces.
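The arrangement can be mimicked with a few lines of toy code. This is a deliberately simplified sketch and not the kernel's resolution algorithm: the `Space` class, `issueRequest` and the ordering of the scope (innermost space first) are assumptions, and only the space names and the `active:balance` identifier come from the discussion above.

```javascript
// Toy model: a space holds endpoints and may import other spaces.
class Space {
  constructor(name) {
    this.name = name;
    this.endpoints = new Map(); // identifier -> handler(argument)
    this.imports = [];          // spaces whose endpoints are projected in here
  }
  resolve(identifier) {
    if (this.endpoints.has(identifier)) return this.endpoints.get(identifier);
    for (const imported of this.imports) {
      const found = imported.resolve(identifier);
      if (found) return found;
    }
    return null;
  }
}

// A request is resolved against the scope, innermost space first (assumed).
function issueRequest(scope, identifier, argument) {
  for (const s of scope) {
    const endpoint = s.resolve(identifier);
    if (endpoint) return endpoint(argument);
  }
  throw new Error("unresolved: " + identifier);
}

const spaceA = new Space("SpaceA");
const spaceB = new Space("SpaceB");
const spaceC = new Space("SpaceC");

// f(x) lives in SpaceC; the value 100 is a made-up balance.
spaceC.endpoints.set("active:balance", customer => ({ customer, balance: 100 }));
spaceA.imports.push(spaceC); // the only place f(x) is "imported"

// Code1 knows f(x) only by identity and runs with scope [SpaceB, SpaceA].
const code1InScope = scope => issueRequest(scope, "active:balance", "CUST:12345678");

console.log(code1InScope([spaceB, spaceA]));
```

Neither `code1InScope` nor `spaceB` mentions SpaceC anywhere; removing the single `imports.push` line is enough to make the request unresolvable.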

Where's the function? I don't care, I'm not running code, I'm asking for a resource

What if (as we showed in last week's discussion of active:lang requesting Code1) SpaceB doesn't import SpaceC, but maps f(x) to a remote request, say to an http resource...

f(x) -> https://bigbank.com/mybalance/x

(presumably with all necessary credentials, perhaps using OAuth!)

If we do this mapping does Code1 or the active:lang language runtime need to import an http-client or OAuth support? Nope, they're extrinsic, provided by the calling context.
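Here is a small sketch of that point: the same code runs unchanged against a local and a remotely mapped f(x). The `context.request` shape, `remoteContext` and the stubbed `httpGet` are hypothetical, no network call is made, and URL-encoding the argument is an extra assumption of the sketch.

```javascript
// Code1 only knows the identity of f(x); it has no http or OAuth imports.
function code1(context) {
  return context.request("active:balance", "CUST:12345678");
}

// Context 1: f(x) happens to be a local function.
const localContext = {
  request: (identifier, x) => "local balance for " + x,
};

// Context 2: f(x) is mapped to a remote resource. `httpGet` is a stub standing
// in for whatever http-client and credentials the calling context supplies.
function remoteContext(httpGet) {
  return {
    request: (identifier, x) =>
      httpGet("https://bigbank.com/mybalance/" + encodeURIComponent(x)),
  };
}

const requestedUrls = [];
const stubGet = url => {
  requestedUrls.push(url); // record instead of going to the network
  return "remote balance";
};

console.log(code1(localContext));
console.log(code1(remoteContext(stubGet)), "via", requestedUrls[0]);
```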

Finally, just as last week's discussion showed that the execution of Code1 is, near as damn it, equivalent to a locally coded function, so the same argument holds for the extrinsic evaluation of f(x). It's as cheap to request f(x) extrinsically as it would have been to implement f(x) inside Code1, or to have used a classical language import of a library containing f(x).

Of course you also know without me saying, f(x) doesn't have to be written in the same language as Code1.

So with the extrinsic evaluation of functions offered by ROC, we are even more liberated from language. We are also liberated from execution on the current physical hardware platform (which we indirectly discussed here).

From Javascript to Extrinsic Contextual Functions

Hopefully you'll be seeing that invoking a resource request from within an arbitrary piece of code is a generalization of how Javascript taps the extrinsic context of the browser HTML page evaluation. Only, unlike the Javascript/Browser analogy, NetKernel is providing a normalized language-neutral execution context, and that context is multi-dimensional.

Extrinsic Recursion

For the computer scientists amongst you, consider that f(x) can be a recursive request - for example it could be...

active:lang+operator@Code1+x@foo

Recursion lies at the heart of computability. Later on we'll show that when you make recursion extrinsic you step out of the plane of language and some really interesting things happen. (If you can't wait here's a taster).
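As a hedged illustration of extrinsic recursion, the sketch below has a piece of code that never calls itself by name; it only asks its context to evaluate active:lang with itself as the operator. The factorial body, the `codeStore` table and the `recursionContext` object are invented for the example and are not NKF APIs.

```javascript
// Code held as state, keyed by identifier. The factorial is just a convenient
// recursive function; the recursive step goes out through the context.
const codeStore = {
  Code1: (x, context) =>
    x <= 1
      ? 1
      : x * context.request("active:lang", { operator: "Code1", x: x - 1 }),
};

// A stand-in for the language runtime: look up the operator and run it,
// handing it this same context for any requests it wants to make.
const recursionContext = {
  request(identifier, args) {
    if (identifier !== "active:lang") throw new Error("unresolved: " + identifier);
    return codeStore[args.operator](args.x, this);
  },
};

console.log(recursionContext.request("active:lang", { operator: "Code1", x: 5 }));
```

Because every recursive step is a request, the context is free to resolve any step somewhere else, or from a cache, without Code1 knowing.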

Status Check

The transfer of code as state to a language runtime ("code state transfer") introduces flexibility and liberates a system architect to step outside of language and choose the dialect for code that most readily solves the problem. We saw in the last section that it also liberates a language from being bound to its functions. "Import" need have no relation to the chosen language; it is an extrinsic concept defined by spatial context.

Finally some practical thoughts on what we've learnt so far...

Being freed from language gives you freedom of choice. Which language you choose might be motivated by technical considerations: a given language has a particular capability (for example, Javascript can natively work with JSON). It might be practical: for example, declaratively processing XML with XSLT can often be trivial compared with using APIs and document object models. To risk going all Zuckerberg, it might even be motivated by a social constraint: your developer knows and is comfortable with language X.

Standing back, with Turing equivalence and dynamic compiled transreption to bytecode, the choice of language is a secondary consideration.

Interface Normalization

You know how, when you go to a large web property and you see a URL like http://bigbank.com/youraccount.asp or for that matter http://bigbank.com/youraccount.jsp - you feel a sense of sadness? No, it's not the negative balance of the account that's upsetting, it's seeing reasonable and sane engineers enshrining the technological implementation into their public resource identifiers.

Don't switch off, this ain't your usual REST zealotry.

Frankly I understand that the platforms they've chosen actually pretty much require that the extension be used to bind to the execution. I find it deeply frustrating that this also just happens to play nicely to a vendor's long-term lock-in of the customer. But alas, even with the high intellectual level of software purchasers, time and again the IT industry finds ways to persuade customers to neglect to follow caveat emptor.

Well, even though ROC allows you to step out of language, as an architect you still need to be diligent about the pitfalls of locking your interface in to your implementation. Here are some simple rules of thumb...

- A local set of services can invoke one another by calling the runtime and expressing the code

- If those services are exposed publicly, for example as a library, you should normalize the interface and hide the code.

For example, active:lang+operator@Code1 is fine if that's local functionality, but if Code1 is actually generating the youraccount balance then please implement a normalized interface mapping something like this...

<mapper>

<config>

<endpoint>

<grammar>active:accountBalance</grammar>

<request>

<identifier>active:lang</identifier>

<argument name="operator">Code1</argument>

</request>

</endpoint>

</config>

<space> ...Code1 and active:lang are in here... </space>

</mapper>
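What the mapping buys you can be sketched in a few lines. This is a toy model of the idea rather than the mapper's actual behaviour: `mapping`, `innerSpace` and `mappedRequest` are hypothetical names, and the "runtime" just echoes which operator it was asked to run.

```javascript
// The public identifier is rewritten to a private request, so callers never
// see active:lang or Code1.
const mapping = {
  "active:accountBalance": {
    identifier: "active:lang",
    args: { operator: "Code1" },
  },
};

// The wrapped space: the language runtime plus the code it runs.
const innerSpace = {
  "active:lang": args => "ran " + args.operator,
};

// Only identifiers declared in the mapping are resolvable from outside.
function mappedRequest(publicIdentifier) {
  const target = mapping[publicIdentifier];
  if (!target) throw new Error("unresolved: " + publicIdentifier);
  return innerSpace[target.identifier](target.args);
}

console.log(mappedRequest("active:accountBalance"));
```

If Code1 is later rewritten in another language, only the `mapping` entry changes; the public `active:accountBalance` identifier stays the same.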

Absolutely nobody but the developer cares what language it's written in.

I've gone on long enough this week. I was hoping to cover the relationships between languages, functions and arguments, but that'll be for next time.

NetKernel West 2011 - Denver Area, April 2011

It's been two years since the last NetKernel conference, NK4 is finished and the NKEE release is out too. There are a ton of things to talk about. We don't have a precise space-time coordinate yet, but this much is certain: Denver Area USA, April 2011...

| NetKernel West 2011 | |
| Location: | Denver Area, USA |
| Time: | April 2011 |

Details on Venue, Dates, Logistics coming soon...

Have a great weekend.

Comments

Please feel free to comment on the NetKernel Forum

Follow on Twitter:

@pjr1060 for day-to-day NK/ROC updates

@netkernel for announcements

@tab1060 for the hard-core stuff

To subscribe for news and alerts

Join the NetKernel Portal to get news, announcements and extra features.

NetKernel will ROC your world
